Building the Factory: Automating Your Data Pipeline

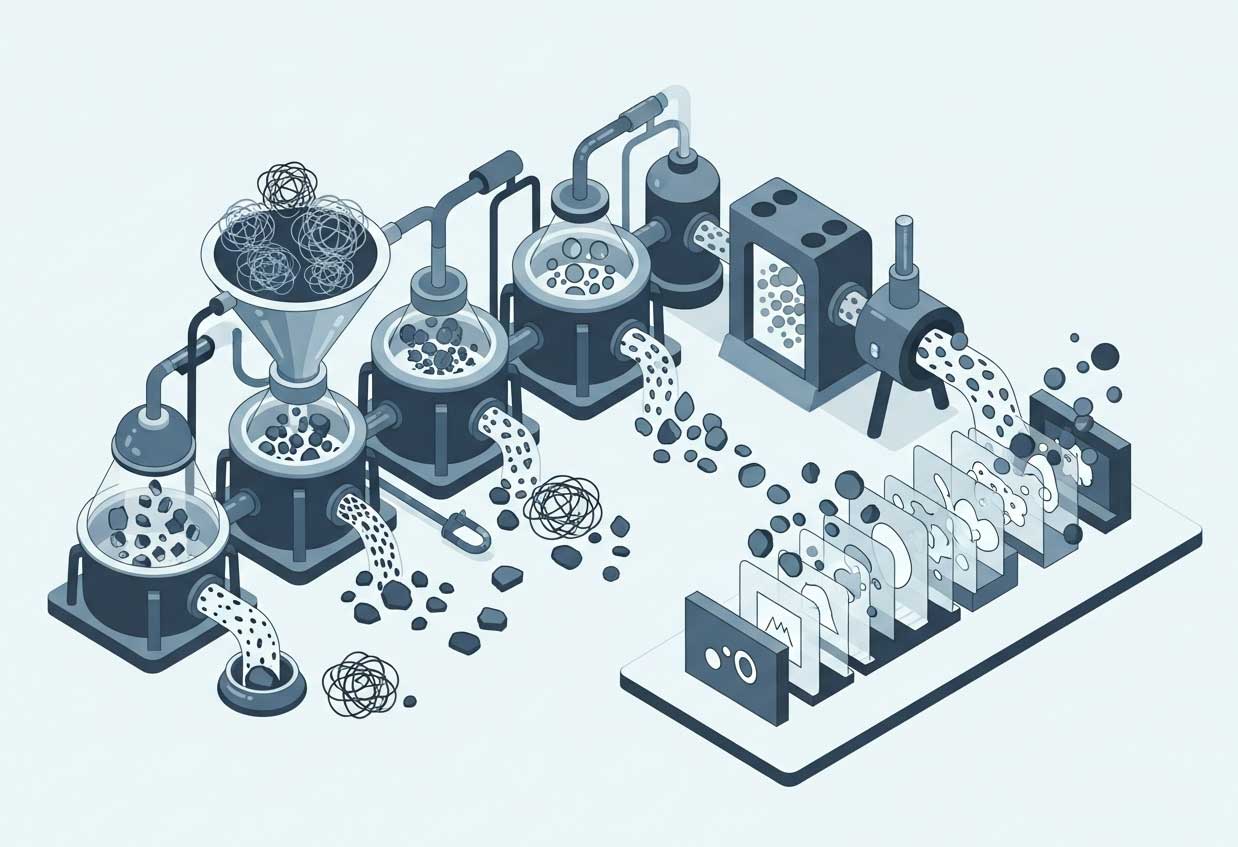

We have reached the end of our journey. Over the last six posts, we have taken a raw, messy dataset and transformed it into a clean, labelled, engineered, and properly split asset ready for modelling.

But if you did all of this manually (running cell after cell in a Jupyter Notebook, dragging CSV files between folders, and tweaking variables by hand), you have a problem.

What happens when new data arrives next week? Do you repeat every single step by hand?

That is not a system; that is a hobby. To build a robust Machine Learning product, you need to move from a “craftsman” mindset to a “factory” mindset. You need Automation.

The Problem with Manual Notebooks

Jupyter Notebooks are fantastic for experimentation (the EDA phase). They are terrible for production.

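- Reproducibility: Cells can be run in any order, so results depend on hidden state. If you ran cell #4 before cell #2, your output reflects that particular session, and no one else can reliably replicate it.

- Scalability: A notebook that depends on someone clicking through cells is hard to schedule to run unattended every night at 3 AM when new data arrives.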

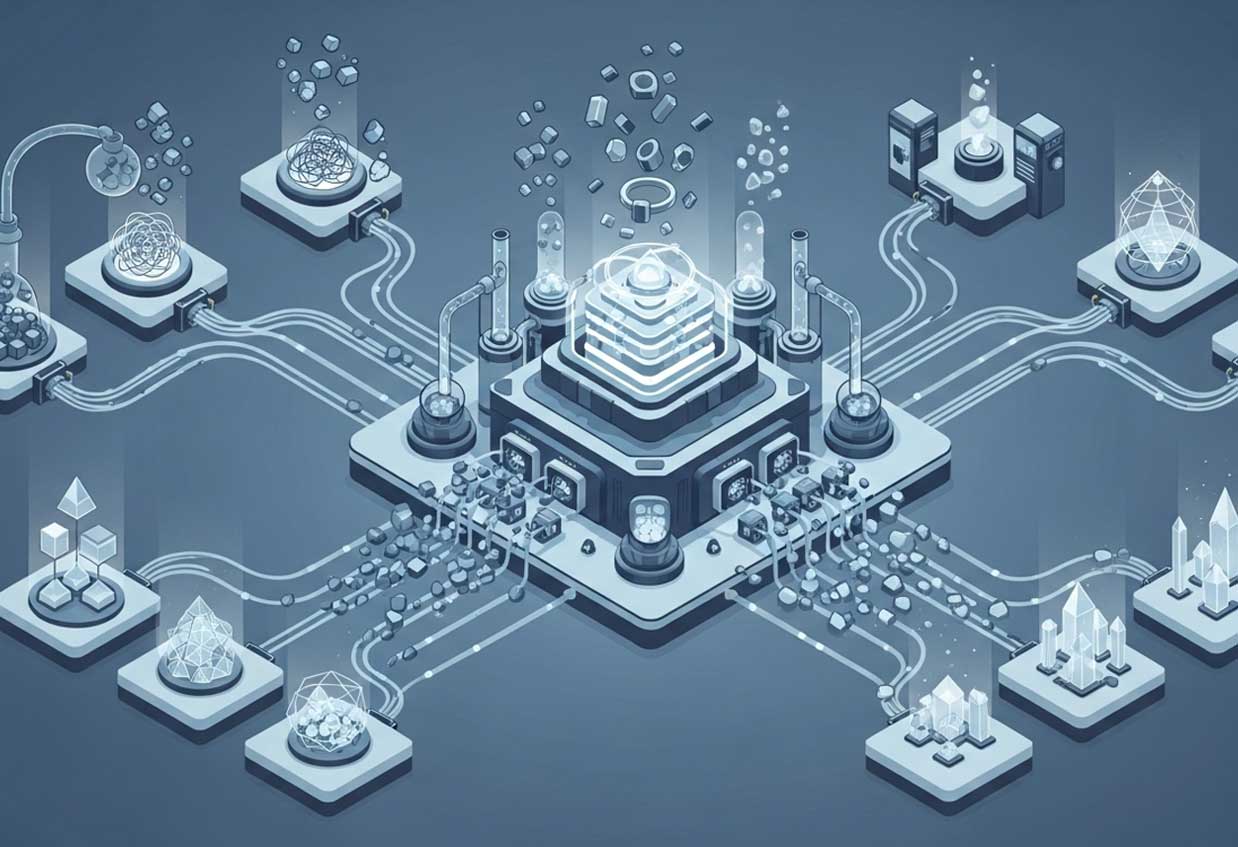

The Solution: Scikit-Learn Pipelines

The first step toward automation is chaining your steps together. Tools like Scikit-Learn offer a feature called Pipeline.

A Pipeline allows you to bundle your preprocessing steps (Imputation $\rightarrow$ Scaling $\rightarrow$ Encoding $\rightarrow$ Model) into a single object. When you call fit() on the pipeline, it fits each transformation in order and then trains the model on the transformed data. When you call predict(), it reuses those fitted transformations automatically.

This ensures that whatever you do to your Training data is applied identically to your Test data (and new live data), eliminating many of the accidental leakage risks we discussed in Part 6.
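
To make this concrete, here is a minimal sketch of such a pipeline. The toy dataset, its column names (age, plan, churned), and the choice of LogisticRegression are assumptions made purely for illustration; they are not taken from the earlier posts. Because scaling applies to numeric columns and encoding to categorical ones, the sketch routes each column type through its own sub-pipeline using ColumnTransformer.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical toy data: columns and values are invented for this example.
df = pd.DataFrame({
    "age": [25, 32, np.nan, 51, 46, 38, 29, np.nan, 60, 41],
    "plan": ["basic", "pro", "basic", np.nan, "pro",
             "basic", "pro", "basic", "pro", "basic"],
    "churned": [0, 1, 0, 1, 1, 0, 0, 0, 1, 1],
})
X, y = df[["age", "plan"]], df["churned"]
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# Numeric columns: fill missing values with the median, then scale.
numeric = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

# Categorical columns: fill missing values with the mode, then one-hot encode.
categorical = Pipeline([
    ("impute", SimpleImputer(strategy="most_frequent")),
    ("encode", OneHotEncoder(handle_unknown="ignore")),
])

preprocess = ColumnTransformer([
    ("num", numeric, ["age"]),
    ("cat", categorical, ["plan"]),
])

# Preprocessing and model bundled into a single object.
model = Pipeline([
    ("preprocess", preprocess),
    ("classifier", LogisticRegression()),
])

# fit() learns imputation values, scaling statistics and categories
# from the training data only, then trains the classifier.
model.fit(X_train, y_train)

# predict() reuses those fitted transformations on unseen data.
predictions = model.predict(X_test)
```

The medians, scaling statistics, and category lists are all learned inside fit() from X_train alone, so the test set never influences preprocessing.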

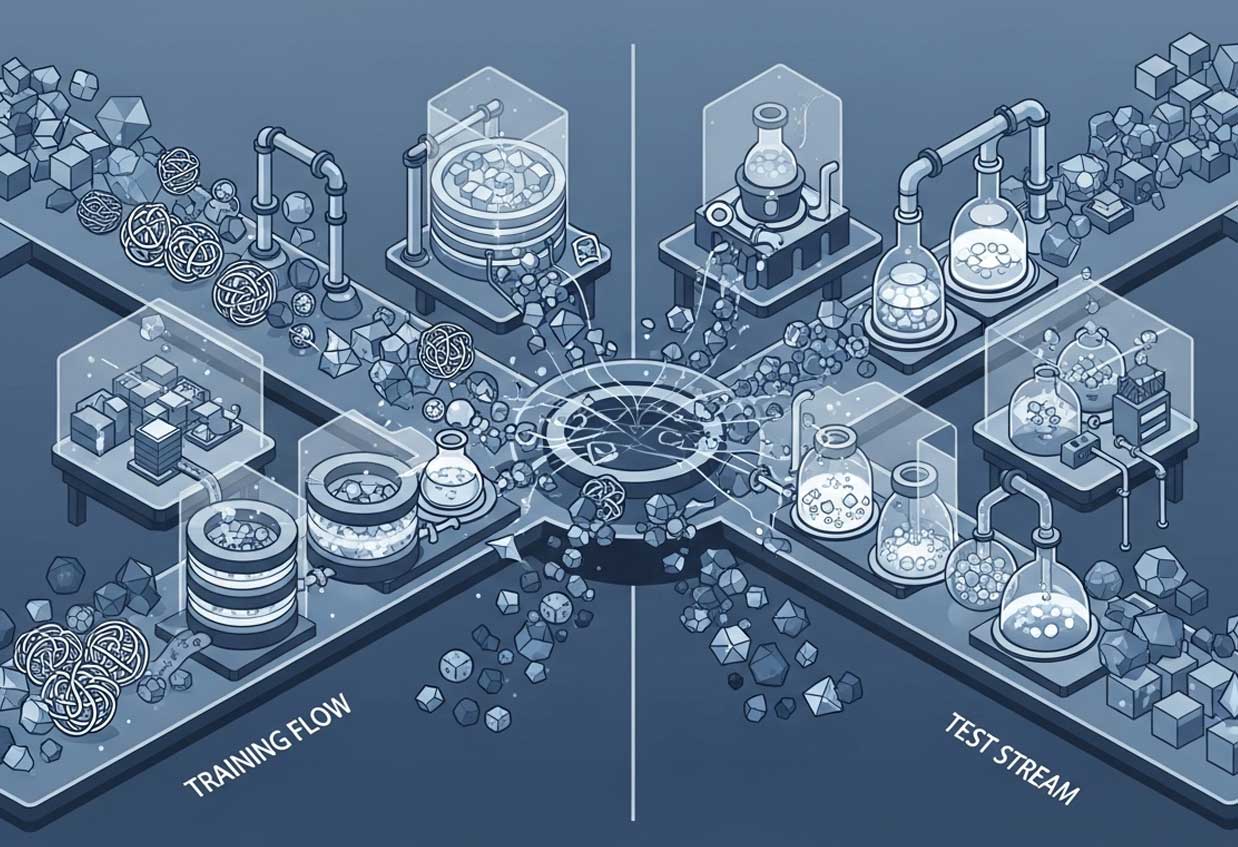

Feature Stores: The Single Source of Truth

In large organisations, different teams often unknowingly build the same features independently, and inconsistently. The marketing team calculates “Customer Lifetime Value” one way, and the sales team calculates it another way.

A Feature Store solves this. It is a centralised repository where engineered features are stored, documented, and versioned.

- Offline Store: Holds historical feature values and is used for training models.

- Online Store: Used for serving real-time predictions with low latency.

By using a Feature Store, you ensure that the “Customer Lifetime Value” used to train the model is mathematically identical to the one used in the app.
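
The core idea can be sketched in a few lines. Everything here is hypothetical: ToyFeatureStore is an in-memory stand-in rather than the API of any real feature store product, and the Customer Lifetime Value formula is invented for illustration. The point is that one shared feature definition feeds both the offline and the online store.

```python
from dataclasses import dataclass, field
from typing import Dict, List


def customer_lifetime_value(avg_order_value: float,
                            orders_per_year: float,
                            years_active: float) -> float:
    """Hypothetical CLV formula; the single shared definition of the feature."""
    return avg_order_value * orders_per_year * years_active


@dataclass
class ToyFeatureStore:
    """In-memory illustration only, not a real feature store API."""
    offline: List[dict] = field(default_factory=list)      # full history, for training
    online: Dict[str, dict] = field(default_factory=dict)  # latest row per customer, for serving

    def ingest(self, customer_id: str, raw: dict, version: str = "v1") -> None:
        # Compute the feature once, then write the same row to both stores.
        row = {
            "customer_id": customer_id,
            "clv": customer_lifetime_value(**raw),
            "version": version,
        }
        self.offline.append(row)
        self.online[customer_id] = row

    def get_training_rows(self) -> List[dict]:
        return list(self.offline)

    def get_online_features(self, customer_id: str) -> dict:
        return self.online[customer_id]


store = ToyFeatureStore()
store.ingest("c1", {"avg_order_value": 50.0, "orders_per_year": 4, "years_active": 2})

training_value = store.get_training_rows()[-1]["clv"]
serving_value = store.get_online_features("c1")["clv"]
```

Since both stores are populated from the same function, training_value and serving_value cannot drift apart. Production feature stores add persistence, point-in-time lookups, and access control on top of this basic contract.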

Version Control for Data (DVC)

You likely use Git to version control your code. But what about your data? If you retrain your model today, can you prove exactly which dataset was used?

Tools like DVC (Data Version Control) allow you to track changes in your data just like you track changes in code. If a model starts failing, you can “checkout” the exact version of the data that was used two months ago to debug the issue.
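
DVC does this by keeping a small metadata file with a content hash of your data in Git, while the data itself lives in a separate cache or remote storage. The sketch below is not DVC's API. It is a simplified illustration of the underlying idea using only the Python standard library, and the function names and manifest layout are assumptions made for this example.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def fingerprint(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        # Read in 1 MiB chunks so large datasets do not need to fit in memory.
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_manifest(data_path: Path, manifest_path: Path) -> dict:
    """Record which exact dataset was used; this small file is what you commit to Git."""
    manifest = {"path": data_path.name, "sha256": fingerprint(data_path)}
    manifest_path.write_text(json.dumps(manifest, indent=2))
    return manifest


def matches_manifest(data_path: Path, manifest_path: Path) -> bool:
    """Check whether the data on disk is the version the manifest describes."""
    manifest = json.loads(manifest_path.read_text())
    return fingerprint(data_path) == manifest["sha256"]


with tempfile.TemporaryDirectory() as tmp:
    data = Path(tmp) / "train.csv"          # hypothetical dataset file
    manifest = Path(tmp) / "train.csv.json"  # hypothetical manifest file

    data.write_text("id,label\n1,0\n2,1\n")
    write_manifest(data, manifest)
    unchanged = matches_manifest(data, manifest)

    # Simulate new data arriving without the manifest being updated.
    data.write_text("id,label\n1,0\n2,1\n3,1\n")
    after_change = matches_manifest(data, manifest)
```

Here unchanged is True and after_change is False: the manifest lets you detect that the data on disk is no longer the version the model was trained on. DVC builds on the same principle and also stores the old versions so you can restore them.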

Conclusion: The “Raw to Ready” Journey

Machine Learning is not just about the model. It is about the system that feeds the model.

- We started by defining the problem and checking Lineage (Part 1).

- We established a Ground Truth with robust labelling (Part 2).

- We explored the data with EDA (Part 3) and cleaned it (Part 4).

- We enriched it through Feature Engineering (Part 5) and split it safely for testing (Part 6).

- Finally, we wrapped it all in an Automated Pipeline (Part 7).

By mastering these steps, you stop relying on luck and start building AI systems that are reliable, scalable, and genuinely valuable.

Ready to start? Pick a dataset, open your IDE, and begin building your pipeline today. The code is waiting.