Don’t Cheat: Proper Splitting and Avoiding Data Leakage

We have now arrived at the most dangerous phase of the data preparation pipeline.

You have collected, labelled, cleaned, and engineered your data. It looks perfect. You train your model, and it achieves 99% accuracy. You pop the champagne.

Then, you deploy it to the real world, and it fails miserably.

Why? Because you unknowingly cheated. You fell victim to Data Leakage.

The first rule of machine learning is that you never judge a model on the data it studied. That is like giving a student the exam questions right before the test.

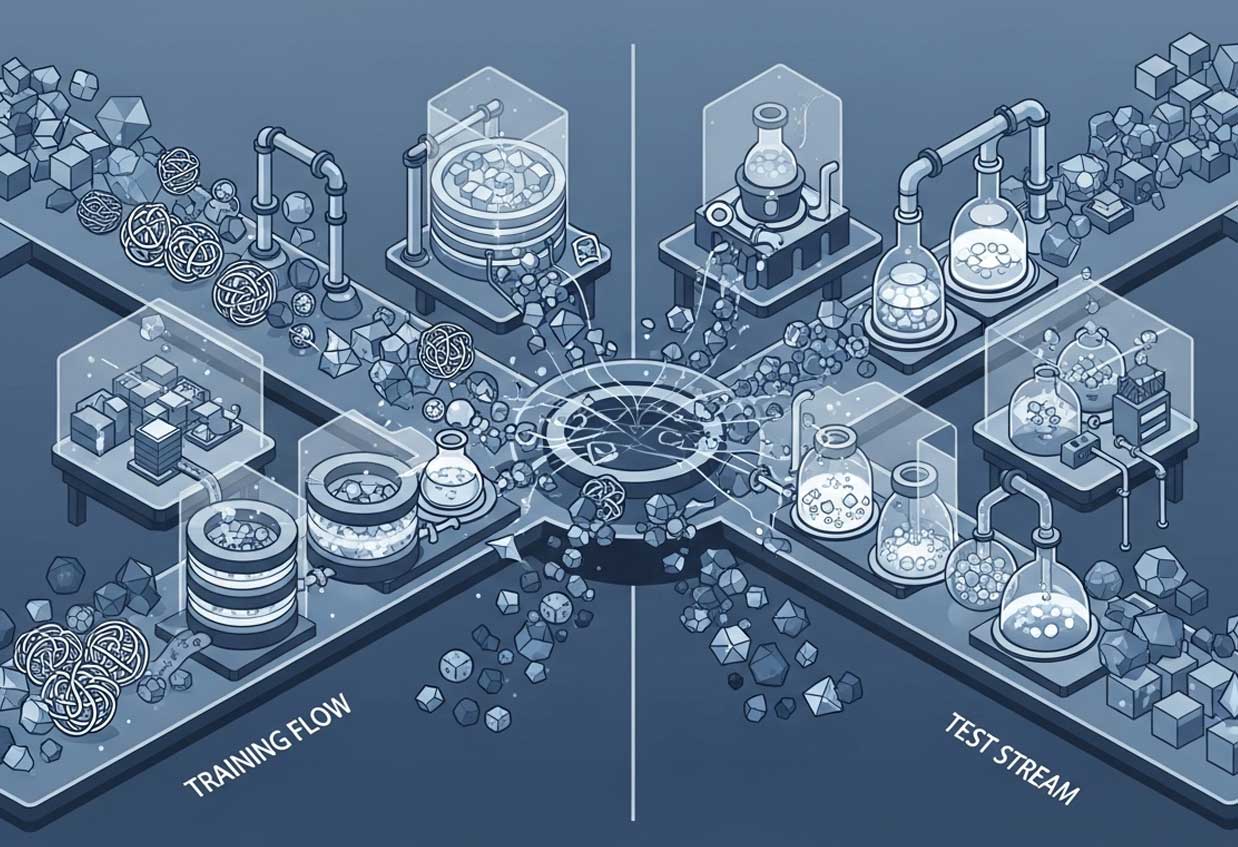

To prevent this, we split our data into three distinct sets:

- Training Set (The Textbook): The model sees this data and learns from it. This is usually 70–80% of your data.

- Validation Set (The Mock Exam): Used during training to tune settings (hyperparameters). The model doesn’t learn directly from this, but we use it to guide our decisions.

- Test Set (The Final Exam): This data is locked away in a vault. The model never sees it until the very end. It is the only true measure of how your model will perform in the real world.
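Here is a minimal sketch of that three-way split using only the Python standard library. The function name `three_way_split` and the 70/15/15 proportions are assumptions chosen for illustration, not a prescribed recipe.

```python
import random

def three_way_split(rows, train_frac=0.7, val_frac=0.15, seed=42):
    """Shuffle once, then cut the data into train / validation / test lists."""
    if not 0 < train_frac + val_frac < 1:
        raise ValueError("train_frac + val_frac must leave room for a test set")
    shuffled = list(rows)                    # copy so the caller's data is untouched
    random.Random(seed).shuffle(shuffled)    # fixed seed keeps the split reproducible
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]        # whatever is left goes into the "vault"
    return train, val, test

train, val, test = three_way_split(range(1000))
print(len(train), len(val), len(test))
```

A random shuffle like this only suits data where row order carries no meaning. The point of the sketch is that the test rows are carved off once, up front, and never touched while you tune.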

Understanding Data Leakage

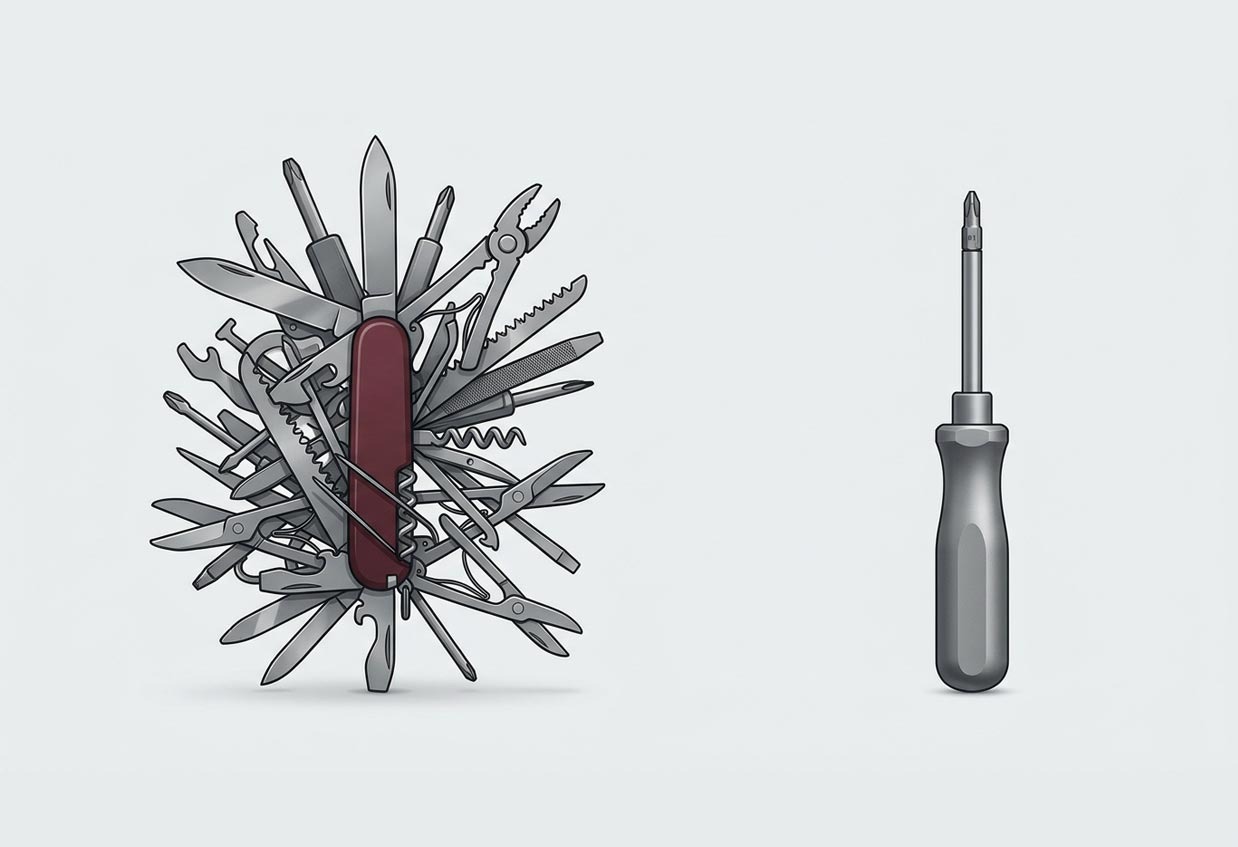

Data Leakage occurs when the model is built using information it will not have at prediction time, whether that information comes from outside the training dataset or from the future. In effect, the model gets a peek at the answers.

Example 1: The “Future” Feature

Imagine you are predicting whether a customer will cancel their subscription (churn). Your dataset includes a column called “Cancellation Date.”

If you leave this column in your training data, the model will instantly learn: “If there is a date here, the customer churned.” It gets 100% accuracy. But in the real world, active customers do not have a cancellation date yet. The model is useless because it relied on information it won’t have at the moment of prediction.
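One way to guard against this is to strip such columns before any training happens. The sketch below assumes hypothetical field names (`cancellation_date`, `churned`, `tenure_months`, `monthly_fee`) and made-up records; your real schema will differ.

```python
# Hypothetical names: "cancellation_date" stands in for the "Cancellation Date"
# column, and "churned" is the assumed target label.
LEAKY_COLUMNS = {"cancellation_date"}
TARGET = "churned"

def build_features(record):
    """Keep only the fields that would exist at the moment of prediction."""
    return {
        key: value
        for key, value in record.items()
        if key not in LEAKY_COLUMNS and key != TARGET
    }

# Made-up example records, purely for illustration.
customers = [
    {"tenure_months": 3, "monthly_fee": 20.0,
     "cancellation_date": "2024-02-10", "churned": 1},
    {"tenure_months": 27, "monthly_fee": 35.0,
     "cancellation_date": None, "churned": 0},
]

X = [build_features(c) for c in customers]   # model inputs, leak-free
y = [c[TARGET] for c in customers]           # labels, kept separate
print(X)
```

A useful habit is to ask of every column: "Would I know this value on the day I need the prediction?" If not, it belongs in `LEAKY_COLUMNS`.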

Example 2: Improper Splitting in Time Series

If you are predicting stock prices, you cannot simply shuffle the data randomly.

If you shuffle, your Training Set might contain data from tomorrow, while your Test Set contains data from yesterday. The model will learn to use tomorrow’s news to predict yesterday’s price.

The Fix: For time-series data, you must split chronologically. For example, train on January–March and test on April.
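A chronological split can be sketched in a few lines. The helper `chronological_split`, the `date` and `close` field names, and the price values are all assumptions made up for this example.

```python
from datetime import date

def chronological_split(rows, cutoff, date_key="date"):
    """Everything before the cutoff trains; the cutoff and later is held out."""
    ordered = sorted(rows, key=lambda r: r[date_key])   # no shuffling
    train = [r for r in ordered if r[date_key] < cutoff]
    test = [r for r in ordered if r[date_key] >= cutoff]
    return train, test

# Made-up daily closes, deliberately out of order.
prices = [
    {"date": date(2024, 4, 2), "close": 104.0},
    {"date": date(2024, 1, 15), "close": 98.5},
    {"date": date(2024, 3, 28), "close": 101.2},
    {"date": date(2024, 2, 9), "close": 99.7},
    {"date": date(2024, 4, 17), "close": 105.3},
]

# Train on January–March, test on April.
train, test = chronological_split(prices, cutoff=date(2024, 4, 1))
print(len(train), len(test))
```

The key property is that every training row is older than every test row, so the model is always evaluated on its own "future".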

Stratified Sampling

Finally, be careful with rare events. If you are detecting a rare disease that only occurs in 1% of patients, a random split might result in zero cases of the disease ending up in your Test Set.

To fix this, we use Stratified Sampling. This forces the split to maintain the same percentage of target classes (e.g., 1% positive, 99% negative) across both the Training and Test sets.
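To show the idea, here is a rough standard-library sketch that splits each class separately so the proportions carry over. The function `stratified_split` is a hypothetical helper written for this post; in practice, libraries such as scikit-learn expose the same behavior through the `stratify` argument of `train_test_split`.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_frac=0.2, seed=0):
    """Return (train_idx, test_idx), sampling each class on its own."""
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)

    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        # At least one example per class lands in the test set. Note that a
        # class with a single example would end up in the test set only.
        n_test = max(1, round(len(indices) * test_frac))
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return train_idx, test_idx

labels = [1] * 10 + [0] * 990        # 1% positive, 99% negative
train_idx, test_idx = stratified_split(labels)

pos_train = sum(labels[i] for i in train_idx)
pos_test = sum(labels[i] for i in test_idx)
print(pos_train / len(train_idx), pos_test / len(test_idx))
```

Because the positives and negatives are each cut at the same fraction, both sets keep the original class balance instead of leaving it to chance.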

Next up: In the final part of our series, we look at how to stop doing this manually. Part 7 covers Automation and Pipelines.
